What Anthropic Just Released

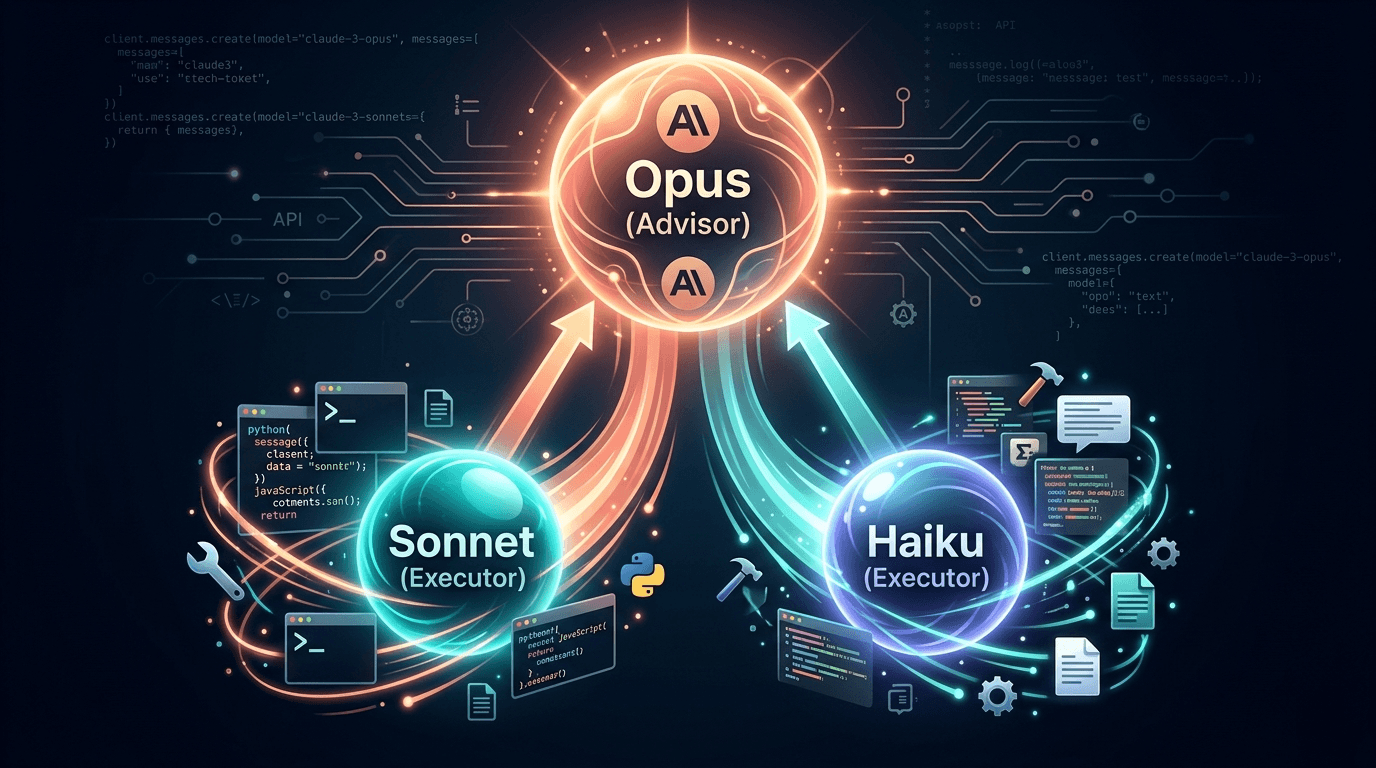

On April 9, 2026, Anthropic announced the advisor strategy — a native API tool that lets you pair Claude Opus 4.6 (expensive, brilliant) as an advisor with Claude Sonnet 4.6 or Haiku 4.5 (cheap, fast) as the executor.

The concept is deceptively simple: your executor model handles the entire task end-to-end. But when it hits a hard decision — an architectural choice, a debugging dead-end, a tricky edge case — it calls Opus for guidance. Opus reviews the full conversation context, returns a short plan or correction (typically 400–700 tokens), and the executor resumes work.

The best part? All of this happens inside a single `/v1/messages` API call. No orchestration code, no extra round-trips, no context management. You add one tool definition to your existing code and you are done.

The tool type is `advisor_20260301`, and it requires the beta header `advisor-tool-2026-03-01`.

"It makes better architectural decisions on complex tasks while adding no overhead on simple ones." — Eric Simmons, CEO, Bolt

This is not a prompt engineering hack or a complex multi-agent framework. It is a first-party Anthropic feature that works out of the box with the standard Messages API.

The Numbers: Why Developers Should Care

Let us start with the pricing that makes this strategy possible:

| Model | Input (per 1M tokens) | Output (per 1M tokens) |
|---|---|---|
| Opus 4.6 | $15 | $75 |
| Sonnet 4.6 | $3 | $15 |
| Haiku 4.5 | $0.80 | $4 |

Opus is 5x more expensive than Sonnet on both input and output, and nearly 19x more expensive than Haiku on output. Those gaps are exactly why the advisor strategy works: you pay Opus rates only for the 400–700 tokens of advice, while the executor generates all the heavy output at its own cheaper rate.
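The ratios quoted above can be checked with a few lines of arithmetic. This is a minimal sketch that copies the per-million-token prices from the table; the dictionary keys are illustrative labels, not API model IDs, and the helper names are made up for this example.

```python
# Per-million-token prices (USD), copied from the pricing table in this article.
# The keys are illustrative labels, not API model identifiers.
PRICES = {
    "opus-4.6": {"input": 15.00, "output": 75.00},
    "sonnet-4.6": {"input": 3.00, "output": 15.00},
    "haiku-4.5": {"input": 0.80, "output": 4.00},
}


def price_ratio(expensive: str, cheap: str, kind: str) -> float:
    """Return how many times more `expensive` costs than `cheap` per token of `kind`."""
    return PRICES[expensive][kind] / PRICES[cheap][kind]


def output_cost(model: str, tokens: int) -> float:
    """Return the dollar cost of generating `tokens` output tokens on `model`."""
    return tokens * PRICES[model]["output"] / 1_000_000


if __name__ == "__main__":
    for cheap in ("sonnet-4.6", "haiku-4.5"):
        for kind in ("input", "output"):
            ratio = price_ratio("opus-4.6", cheap, kind)
            print(f"Opus vs {cheap} ({kind}): {ratio:.2f}x")
    # Output-token cost of one advisor reply at the top of the quoted 400-700 range.
    print(f"700 Opus output tokens: ${output_cost('opus-4.6', 700):.4f}")
```

The sketch only prices output tokens for the advice itself; any input tokens the advisor reads are billed separately and are not modeled here.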

Benchmark Results

Sonnet + Opus advisor:

- SWE-bench Multilingual: +2.7 percentage points vs. Sonnet solo

- Cost per agentic task: 11.9% reduction — it actually costs less than running Sonnet alone on some benchmarks because the executor makes fewer retries and takes more efficient paths

- Terminal-Bench 2.0 and BrowseComp: Improved scores while costing less than Sonnet solo

Haiku + Opus advisor:

- BrowseComp: 41.2% score vs. 19.7% for Haiku solo — more than double the performance

- Cost per task: 85% less than running Sonnet solo

- Trails Sonnet solo by 29% in score but at a fraction of the cost

The key insight from Anthropic: advisors generate only short plans of around 400–700 tokens. So the expensive Opus model is used very sparingly while the cheap executor handles all the bulk output.

"The advisor tool enables Haiku 4.5 to dynamically scale intelligence by consulting Opus 4.6 as complexity demands, matching frontier-model quality at 5x lower cost." — Anuraj Pandey, ML Engineer at Eve Legal

How to Implement It: Messages API

Here is the complete implementation. If you are already using the Claude Messages API, the change is one extra entry in your `tools` array plus the beta flag.

Basic Python Example

    import anthropic

    client = anthropic.Anthropic()

    response = client.beta.messages.create(
        model="claude-sonnet-4-6",  # Executor model (cheap)
        max_tokens=4096,
        betas=["advisor-tool-2026-03-01"],
        tools=[
            {
                "type": "advisor_20260301",
                "name": "advisor",
                "model": "claude-opus-4-6",  # Advisor model (smart)
                "max_uses": 3,  # Optional: cap advisor calls for cost control
            }
        ],
        messages=[
            {
                "role": "user",
                "content": "Build a concurrent worker pool in Go with graceful shutdown.",
            }
        ],
    )

TypeScript Example

    import Anthropic from "@anthropic-ai/sdk";

    const client = new Anthropic();

    const response = await client.beta.messages.create({
      model: "claude-sonnet-4-6",
      max_tokens: 4096,
      betas: ["advisor-tool-2026-03-01"],
      tools: [
        {
          type: "advisor_20260301",
          name: "advisor",
          model: "claude-opus-4-6",
          max_uses: 3,
        },
      ],
      messages: [
        { role: "user", content: "Build a concurrent worker pool in Go with graceful shutdown." },
      ],
    });

Key Implementation Details

- Beta header required: `anthropic-beta: advisor-tool-2026-03-01`
- Tool type: `advisor_20260301` (this is the magic string)
- `max_uses` parameter: Caps how many times Opus is consulted per request (cost control). Once reached, further advisor calls return an `advisor_tool_result_error` with `error_code: "max_uses_exceeded"`
- Billing split: Advisor tokens charged at Opus rate, executor tokens at Sonnet/Haiku rate. Token usage is reported in `usage.iterations[]` with separate `advisor_message` and `message` entries
- `max_tokens` applies to executor output only; it does not bound advisor tokens
- Advisor output does NOT stream: expect a brief pause while the sub-inference runs. The stream resumes when the advisor result arrives
- No built-in conversation-level cap: track advisor calls client-side. When you hit your budget, remove the advisor from `tools` AND strip all `advisor_tool_result` blocks from message history (or you get a 400 error)
- Priority Tier on the executor does NOT extend to the advisor; you need Priority Tier on both models separately
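The client-side budget tracking described in the list above could be sketched as follows. This is a rough illustration, not Anthropic's reference code: it assumes message content blocks are plain dicts (for example after converting SDK objects with `model_dump()`), and the helper names `count_advisor_results` and `strip_advisor` are hypothetical. The article only names `advisor_tool_result` blocks; whether any paired tool-call block must also be removed is not stated there, so the sketch leaves such blocks alone.

```python
from typing import Any

# Block type named in the article; everything else here is an assumption.
ADVISOR_RESULT_TYPE = "advisor_tool_result"


def _is_advisor_result(block: Any) -> bool:
    """True for a dict-shaped content block carrying an advisor result."""
    return isinstance(block, dict) and block.get("type") == ADVISOR_RESULT_TYPE


def count_advisor_results(messages: list[dict[str, Any]]) -> int:
    """Count advisor result blocks across the whole conversation history."""
    total = 0
    for message in messages:
        content = message.get("content")
        if isinstance(content, list):  # plain-string content has no blocks
            total += sum(1 for block in content if _is_advisor_result(block))
    return total


def strip_advisor(
    tools: list[dict[str, Any]], messages: list[dict[str, Any]]
) -> tuple[list[dict[str, Any]], list[dict[str, Any]]]:
    """Return copies of tools and messages with the advisor removed.

    The inputs are not mutated. A message whose content becomes an empty
    list may need further handling before it is sent again.
    """
    kept_tools = [t for t in tools if not str(t.get("type", "")).startswith("advisor_")]
    kept_messages = []
    for message in messages:
        content = message.get("content")
        if isinstance(content, list):
            content = [b for b in content if not _is_advisor_result(b)]
        kept_messages.append({**message, "content": content})
    return kept_tools, kept_messages
```

A caller might compare `count_advisor_results(messages)` against its own conversation-level budget before each request and, once the budget is spent, send the outputs of `strip_advisor(tools, messages)` instead of the originals.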

Multi-Turn Conversations

Pass the full assistant content (including `advisor_tool_result` blocks) back on subsequent turns:

    # Assumes `client`, `tools` (including the advisor tool), and `messages`
    # are already defined, as in the basic example.

    # First turn
    response = client.beta.messages.create(
        model="claude-sonnet-4-6",
        max_tokens=4096,
        betas=["advisor-tool-2026-03-01"],
        tools=tools,
        messages=messages,
    )

    # Append full response (including advisor blocks)
    messages.append({"role": "assistant", "content": response.content})
    messages.append({"role": "user", "content": "Now add a max-in-flight limit of 10."})

    # Second turn
    response = client.beta.messages.create(
        model="claude-sonnet-4-6",
        max_tokens=4096,
        betas=["advisor-tool-2026-03-01"],
        tools=tools,
        messages=messages,
    )

Combining With Other Tools

The advisor works alongside web search, code execution, and any custom tools:

    tools = [
        {"type": "web_search_20250305", "name": "web_search", "max_uses": 5},
        {"type": "advisor_20260301", "name": "advisor", "model": "claude-opus-4-6"},
        {
            "name": "run_bash",
            "description": "Run a bash command",
            "input_schema": {"type": "object", "properties": {"command": {"type": "string"}}},
        },
    ]

The executor can search the web, call the advisor, and use your custom tools in the same turn. The advisor's plan can inform which tools the executor reaches for next.
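Unlike the advisor, a custom tool such as `run_bash` is executed by your own code: the model returns a `tool_use` block, and you reply with a matching `tool_result` block in the next user message. The sketch below shows one way to build those results. It assumes content blocks have already been converted to plain dicts, and the helper names are illustrative. Running model-generated shell commands is unsafe outside a sandbox, so treat this as a demonstration only.

```python
import subprocess
from typing import Any


def run_bash(command: str, timeout: float = 30.0) -> str:
    """Run a shell command and return its combined stdout and stderr.

    WARNING: executes arbitrary commands; only use inside a sandbox.
    """
    completed = subprocess.run(
        command, shell=True, capture_output=True, text=True, timeout=timeout
    )
    return completed.stdout + completed.stderr


def tool_results_for(content: list[dict[str, Any]]) -> list[dict[str, Any]]:
    """Build a tool_result block for each run_bash tool_use block in an assistant turn."""
    results = []
    for block in content:
        if block.get("type") == "tool_use" and block.get("name") == "run_bash":
            results.append(
                {
                    "type": "tool_result",
                    "tool_use_id": block["id"],  # must echo the id of the tool_use block
                    "content": run_bash(block["input"]["command"]),
                }
            )
    return results
```

The returned list would be sent back as the `content` of the next `user` message, after appending the assistant turn to the history.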

The Claude Code Trick: /model opusplan

If you use Claude Code (the CLI tool) rather than the API directly, there is a hidden command that gives you the same advisor-like benefit without writing any code.

Type this in your Claude Code terminal:

    /model opusplan

This single command changes how Claude Code uses models:

- Plan mode (when Claude Code is analyzing, understanding, and designing): Uses Opus 4.6 for maximum reasoning

- Execution mode (when Claude Code is writing/editing code): Automatically switches to Sonnet 4.6 for speed and efficiency

Why This Matters for Claude Code Users

On subscription plans, Claude Code is metered by session limits rather than per-token charges. Running everything on Opus burns through those limits fast. With `/model opusplan`:

- Opus-level reasoning for architecture decisions and task understanding

- Sonnet-level efficiency for the actual code generation

- In real-world testing, this produces comparable or even superior results to running Opus for everything, while consuming significantly less of your session budget

How to Use It

- Open Claude Code in your terminal
- Type `/model opusplan` at the beginning of your session
- Work normally; Claude Code handles the model switching automatically
- Opus activates only when you enter Plan mode (`/plan` command); all regular interactions use Sonnet

This is arguably the most practical immediate takeaway from this article for developers who are not building custom API integrations. Make it a habit to set `/model opusplan` at the beginning of each Claude Code session.

When to Use It (and When Not To)

The advisor strategy is not a universal improvement. Here is when it shines and when you should skip it.

Best Use Cases

- Complex agentic tasks with many tool calls — coding agents, research pipelines, multi-step automation. The advisor helps the executor make better strategic decisions early, reducing total retries and tool calls.

- High-volume tasks where cost matters — customer support bots, document processing, content generation at scale. Haiku + Opus advisor delivers strong quality at 85% less than Sonnet solo.

- Tasks with a mix of easy and hard steps — most real-world workflows have a few critical decision points surrounded by routine execution. The advisor activates only at the hard parts.

- Long-running autonomous agents — tools like OpenClaw can configure the advisor strategy for extended agentic runs.

When NOT to Use It

- Single-turn Q&A — nothing to plan, so there is no benefit. Just use the right model directly.

- Pure pass-through model pickers — if your users choose their own model, adding a hidden advisor creates confusing billing.

- Workloads where every turn requires Opus reasoning — if the task is uniformly hard, just use Opus directly.

- Simple prompts that Haiku/Sonnet handle perfectly — do not add overhead for tasks that already work.

Recommended Configurations (From Anthropic)

| Current Setup | Recommended Change | Expected Outcome |
|---|---|---|
| Sonnet on complex tasks | Add Opus advisor | Quality lift at similar or lower cost |
| Haiku, want better quality | Add Opus advisor | Higher than Haiku alone, much cheaper than Sonnet |
| Coding with Sonnet default effort | Sonnet medium effort + Opus advisor | Similar intelligence, lower cost |
| Maximum intelligence needed | Sonnet default effort + Opus advisor | Highest quality at below-Opus prices |

Real-World Cost Comparison

Here is what a typical complex agentic task costs across different configurations. The figures assume 50,000 input tokens and 10,000 output tokens per task, with the advisor consulted 2-3 times and returning roughly 600 output tokens per consultation:

| Configuration | Benchmark Score (relative) | Cost Per Task | Savings vs. Opus |
|---|---|---|---|
| Opus 4.6 solo | 100% (baseline) | ~$1.50 | n/a |
| Sonnet 4.6 solo | ~94% | ~$0.30 | 80% |
| Sonnet + Opus advisor | ~97% | ~$0.26 | 83% |
| Haiku 4.5 solo | ~65% | ~$0.08 | 95% |
| Haiku + Opus advisor | ~82% | ~$0.12 | 92% |

The Sonnet + Opus advisor configuration is the standout: it actually costs less than Sonnet alone in many benchmarks (because smarter planning leads to fewer retries), while delivering quality that is within 3% of Opus.

For high-volume workloads, the Haiku + Opus advisor row is remarkable — you get 82% of Opus performance at 8% of its cost. At scale, that is the difference between a viable product and an unsustainable burn rate.
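The solo rows of the comparison follow directly from the stated token counts and the article's price table, which the sketch below reproduces. The advisor rows are left out on purpose: they depend on measured retry counts and advisor usage that cannot be derived from the stated assumptions alone. Function and constant names are illustrative.

```python
# (input, output) USD per million tokens, as listed in this article's price table.
PRICES = {
    "Opus 4.6": (15.00, 75.00),
    "Sonnet 4.6": (3.00, 15.00),
    "Haiku 4.5": (0.80, 4.00),
}

# Per-task token counts stated in the cost comparison.
INPUT_TOKENS = 50_000
OUTPUT_TOKENS = 10_000


def solo_cost(model: str) -> float:
    """Dollar cost of one task when `model` handles it alone."""
    input_price, output_price = PRICES[model]
    return (INPUT_TOKENS * input_price + OUTPUT_TOKENS * output_price) / 1_000_000


def savings_vs_opus(cost: float) -> float:
    """Fraction saved relative to running the same task on Opus alone."""
    return 1 - cost / solo_cost("Opus 4.6")


if __name__ == "__main__":
    for model in PRICES:
        cost = solo_cost(model)
        print(f"{model}: ${cost:.2f} per task, {savings_vs_opus(cost):.0%} saved vs. Opus")
```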

How the Billing Works

Tokens are tracked separately in the API response:

    {
      "usage": {
        "input_tokens": 412,
        "output_tokens": 531,
        "iterations": [
          {"type": "message", "input_tokens": 412, "output_tokens": 89},
          {"type": "advisor_message", "model": "claude-opus-4-6",
           "input_tokens": 823, "output_tokens": 1612},
          {"type": "message", "input_tokens": 1348, "output_tokens": 442}
        ]
      }
    }

Top-level usage fields reflect executor tokens only. The `advisor_message` iterations in the array are billed at Opus rates; use `usage.iterations` for accurate cost tracking.
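One way to turn `usage.iterations` into a dollar figure is sketched below. It assumes a Sonnet executor, uses the per-million-token prices from this article, and treats the usage payload as a plain dict shaped like the sample above. Cache-related token fields, if any are reported, are ignored. The function name is illustrative.

```python
from typing import Any

# (input, output) USD per million tokens, keyed by iteration type.
# Assumes a Sonnet executor and an Opus advisor at this article's listed prices.
RATES = {
    "message": (3.00, 15.00),
    "advisor_message": (15.00, 75.00),
}


def cost_by_iteration_type(usage: dict[str, Any]) -> dict[str, float]:
    """Sum dollar cost per iteration type from a usage dict with an `iterations` list."""
    totals: dict[str, float] = {}
    for iteration in usage["iterations"]:
        input_rate, output_rate = RATES[iteration["type"]]
        cost = (
            iteration["input_tokens"] * input_rate
            + iteration["output_tokens"] * output_rate
        ) / 1_000_000
        totals[iteration["type"]] = totals.get(iteration["type"], 0.0) + cost
    return totals


if __name__ == "__main__":
    sample_usage = {
        "input_tokens": 412,
        "output_tokens": 531,
        "iterations": [
            {"type": "message", "input_tokens": 412, "output_tokens": 89},
            {"type": "advisor_message", "model": "claude-opus-4-6",
             "input_tokens": 823, "output_tokens": 1612},
            {"type": "message", "input_tokens": 1348, "output_tokens": 442},
        ],
    }
    for kind, cost in cost_by_iteration_type(sample_usage).items():
        print(f"{kind}: ${cost:.6f}")
```

Summing the two entries gives the total for the request. Keeping them separate makes it easier to see how much of the bill comes from the advisor.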

The Bigger Picture: AI Agent Economics in 2026

The advisor strategy is part of a broader industry shift toward multi-model architectures for AI agents. The era of running every token through your most expensive model is ending.

The Industry Trend

- OpenAI has similar routing with GPT-5.4 mini/nano for different task complexities

- Google's Gemini 3.1 Flash-Lite targets the same "cheap bulk + smart escalation" pattern

- GLM-5.1 offers an alternative open-source executor for cost-sensitive deployments

- Meta Muse Spark takes a proprietary approach to model tiering

The advisor pattern could become the default architecture for production AI agents in 2026. Instead of choosing between "smart and expensive" or "cheap and limited," you get both — smart guidance on hard decisions, cheap execution everywhere else.

Connection to the OpenClaw Story

This also relates to the story of Anthropic blocking OpenClaw. The advisor strategy gives API users a more efficient way to use Claude, which is exactly the kind of optimization that third-party agent frameworks like OpenClaw were building independently. Now it is a first-party feature.

What This Means for Developers

If you are building AI-powered products, the cost-per-task calculation just changed fundamentally. The advisor strategy means:

- You can ship Opus-quality features without Opus-level costs

- Haiku becomes viable for tasks that previously required Sonnet

- Agent architectures get simpler — no custom routing logic, no model picker, just one API call

- Cost optimization is no longer about choosing the cheapest model — it is about pairing models intelligently


Sources & Primary References

- Anthropic's Advisor strategy announcement — the primary source describing how to pair Opus and Sonnet in production workflows.

- Anthropic's Claude pricing page — current token pricing and tier comparison for Opus and Sonnet.

- Anthropic API documentation — reference docs for tool use, caching, and streaming that underpin the Advisor pattern.

- Anthropic on model deprecation and migration — the canonical list of current and deprecated Claude models.