The Bombshell: 6,852 Sessions That Broke the Trust

On April 2, 2026, Stella Laurenzo — Senior Director of AI at AMD — opened GitHub issue #42796 on the official Claude Code repository. The title was clinical. The conclusion was anything but: "Claude cannot be trusted to perform complex engineering tasks."

Laurenzo did not write a rant. She wrote a forensics report. Her team had been logging every Claude Code session their AMD engineers ran since January 2026: 6,852 session JSONL files containing 17,871 thinking blocks, 234,760 tool calls, and over 18,000 user prompts across four open-source projects. She then ran statistical analysis against that corpus to measure exactly how Claude Code's behavior changed week by week.

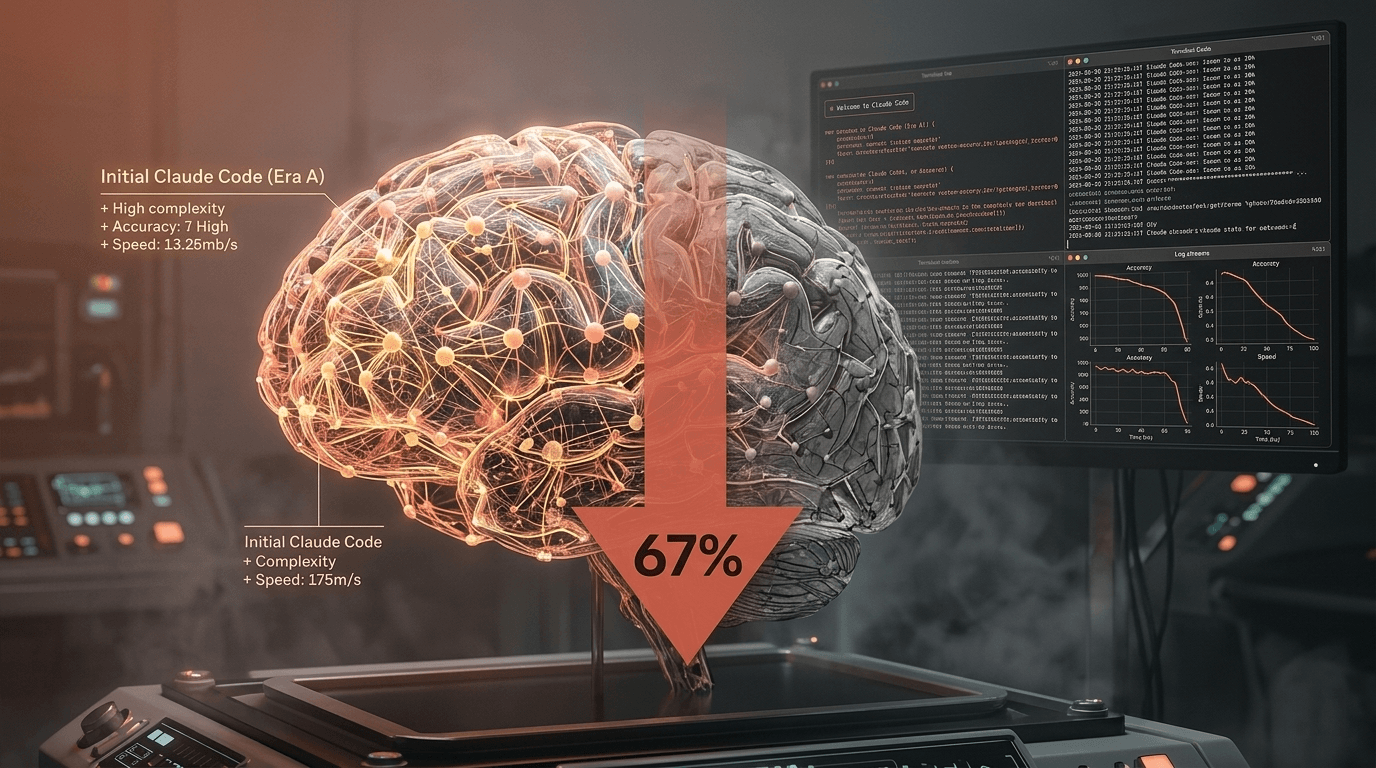

The headline number that lit the internet on fire: thinking depth dropped roughly 67% by late February 2026 — weeks before Anthropic publicly changed anything users could see.

She ended the post with the line that made AMD's decision public: her team was switching AI coding providers. Within six days, the issue had 128 comments; The Register, WinBuzzer, DEV Community, and InfoWorld had picked it up; the Reddit thread had crossed 1,060 upvotes; and Boris Cherny, head of the Claude Code team at Anthropic, was personally responding on Hacker News.

This is not the usual "vibes" complaint about an AI getting worse. This is a senior AI executive at one of the world's largest semiconductor companies publishing receipts.

The Data: What 17,871 Thinking Blocks Revealed

Laurenzo's analysis rests on a Pearson correlation of 0.971 between feature fields in the thinking blocks and the actual length of the reasoning content. In plain English: she had a near-perfect proxy for how hard Claude was actually thinking on each turn, even after Anthropic later started hiding the reasoning text from users.
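To make the proxy idea concrete, here is a minimal sketch of a Pearson correlation between a per-block proxy value and the visible reasoning length. The function is the standard textbook formula; the two sample lists are made-up numbers for illustration, not data from the report, and the article does not say how Laurenzo's own script was written.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance and variances, left unnormalised because the 1/n factors cancel.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


# Hypothetical values: a proxy field size per thinking block, and the
# reasoning text length for the same blocks while it was still visible.
proxy_sizes = [120, 340, 560, 900, 1500]
reasoning_chars = [400, 1100, 1900, 3000, 5200]

r = pearson(proxy_sizes, reasoning_chars)
print(f"r = {r:.3f}")
```

A coefficient near 1 on the period where both values are visible is what justifies using the proxy alone once the reasoning text is hidden.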

Here is what the 6,852 sessions showed.

Thinking depth collapsed

- Late January 2026 baseline: deep, multi-step reasoning on complex turns

- Late February 2026: thinking depth down ~67% from baseline

- Early March 2026: down ~75% from baseline at peak degradation

- Post-March 12 (after redaction rolled out): still ~73% below baseline

The key finding here is the timing. The depth was already gone before Anthropic did anything visible.

Research behavior was destroyed

The most damning metric for anyone who actually ships software:

- File reads per edit: dropped from 6.6 to 2.0 — a 70% reduction

- Edits made with zero prior file reads: jumped from 6.2% to 33.7% — a +443% increase

- Full-file rewrites (instead of surgical edits): rose from 4.9% to 11.1% — +127%

Before the regression, Claude Code behaved like a careful senior engineer: read the target file, read related files, grep for usages, check headers and tests, then make a precise edit. After: edit blind and hope. Or worse — rewrite the entire file because it could not be bothered to find the right diff.
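The article does not spell out how these ratios were computed, so the following is only a sketch of one plausible definition. It assumes each tool call has been reduced to a `(tool, path)` pair with tool names `Read` and `Edit`; that schema and those names are assumptions for illustration, not the real session log format.

```python
def edit_research_metrics(calls):
    """Summarise how much reading precedes editing in one session.

    calls: list of (tool, path) tuples in session order (hypothetical schema).
    """
    files_read = set()
    reads = edits = blind_edits = 0
    for tool, path in calls:
        if tool == "Read":
            reads += 1
            files_read.add(path)
        elif tool == "Edit":
            edits += 1
            # A "blind" edit touches a file that was never read earlier.
            if path not in files_read:
                blind_edits += 1
    if edits == 0:
        return {"reads_per_edit": None, "blind_edit_rate": None}
    return {
        "reads_per_edit": reads / edits,
        "blind_edit_rate": blind_edits / edits,
    }


sample_calls = [
    ("Read", "a.py"),
    ("Read", "b.py"),
    ("Edit", "a.py"),  # a.py was read first
    ("Edit", "c.py"),  # c.py was never read: blind edit
]
metrics = edit_research_metrics(sample_calls)
print(metrics)
```

Tracking both numbers matters because a session can keep a healthy average of reads per edit while still slipping in a few edits to files it never opened.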

New failure modes appeared overnight

Laurenzo's team had built a stop hook: a small script that fires whenever Claude Code prematurely stops, dodges ownership of a problem, or asks for permission it does not need. It is a behavioral tripwire.

- Before March 8, 2026: the stop hook fired 0 times

- March 8 through March 25: the stop hook fired 173 times

- User interrupts per 1,000 tool calls: rose from 0.9 to 11.4 — a +1,167% increase
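The article does not publish the hook's actual rules, so the sketch below only illustrates the tripwire idea: scan the model's final message for phrases that suggest it is stopping early or asking for unneeded permission. The phrase list is entirely hypothetical, and wiring the check into Claude Code's hook mechanism is not shown. A small helper for the per-1,000-tool-calls rate is included as well.

```python
import re

# Hypothetical patterns for illustration; not the rules from the report.
TRIPWIRE_PATTERNS = [
    r"\bshould i (continue|proceed)\b",
    r"\bwould you like me to\b",
    r"\bi('ll| will) stop here\b",
    r"\bpre-existing (issue|problem)\b",
]


def trips_wire(final_message: str) -> bool:
    """Return True if the message matches any tripwire phrase."""
    text = final_message.lower()
    return any(re.search(pattern, text) for pattern in TRIPWIRE_PATTERNS)


def per_thousand(events: int, tool_calls: int) -> float:
    """Normalise an event count to a rate per 1,000 tool calls."""
    if tool_calls <= 0:
        raise ValueError("tool_calls must be positive")
    return 1000 * events / tool_calls


print(trips_wire("Should I continue with the refactor?"))
print(trips_wire("Fixed the bug and all tests pass."))
```

Phrase matching like this is crude and will miss paraphrases, but a count that goes from zero to well above zero on a fixed rule set is still a usable signal.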

Her logs also captured the model fabricating commit SHAs that did not exist, inventing package names that were never published, and citing API methods that had never shipped. None of this was happening at scale in January.

The cost cliff

This is what made AMD's engineering leadership pull the cord. Their Claude Code spend went from:

- February 2026: $345

- March 2026: $42,121

That is a 122x increase in a single month — 80x more API requests, 64x more output tokens. The dumber the model got, the more retry loops, correction cycles, and full-file rewrites it generated. AMD had scaled from running 1–3 concurrent Claude Code agents to 5–10. After the regression hit, the agents started cascading failures into each other. The team had to shut down their entire agent cluster and revert to single-session mode. Even then, the output quality kept slipping.
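The multiples and percentages quoted in this section are easy to check yourself. This small sketch recomputes them from the before and after figures given above; the helper names are ours, not part of any tool.

```python
def fold_increase(before, after):
    """How many times larger `after` is than `before`."""
    return after / before


def percent_change(before, after):
    """Signed percentage change from `before` to `after`."""
    return (after - before) / before * 100


# Monthly spend: February vs March 2026.
spend_multiple = fold_increase(345, 42121)

# Behavioural metrics quoted earlier in the article.
reads_per_edit_change = percent_change(6.6, 2.0)
blind_edit_change = percent_change(6.2, 33.7)
full_rewrite_change = percent_change(4.9, 11.1)
interrupt_change = percent_change(0.9, 11.4)

print(f"spend: {spend_multiple:.1f}x")
print(f"reads per edit: {reads_per_edit_change:.1f}%")
print(f"blind edits: {blind_edit_change:.1f}%")
print(f"full rewrites: {full_rewrite_change:.1f}%")
print(f"interrupts: {interrupt_change:.1f}%")
```

The recomputed values land within about a percentage point of the figures in the text, so the headline numbers are at least internally consistent.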

The Two Silent Changes That Broke Claude Code

Anthropic did not publish a blog post saying "we made Claude Code think less." It shipped two quiet changes, Adaptive Thinking with the Opus 4.6 release and a configuration default flip, that combined to produce the 67% collapse.

Change #1: Adaptive Thinking (February 9, 2026)

With the launch of Claude Opus 4.6, Anthropic introduced Adaptive Thinking: instead of using a fixed reasoning budget, the model itself decides how much thinking to allocate per turn.

In theory, this is elegant. Easy turns get short reasoning. Hard turns get deep reasoning. Token spend goes down. Latency goes down. Everyone wins.

In practice, Boris Cherny later confirmed on Hacker News what Laurenzo's data already showed: on certain turns, Adaptive Thinking allocated *zero* reasoning tokens. Cherny's exact words: "The specific turns where it fabricated (stripe API version, git SHA suffix, apt package list) had zero reasoning emitted, while the turns with deep reasoning were correct."

The model was deciding to skip thinking entirely on turns where thinking was the only thing standing between a correct answer and a hallucination.

Change #2: Default Effort Lowered to Medium (March 3, 2026)

Claude Code has a per-session effort parameter that controls the reasoning budget ceiling — low, medium, high, or max. Until early March 2026, the default was high.

On March 3, Anthropic silently flipped the default to `medium` — without a release note, without a changelog entry visible to users.

Combine the two changes:

- The model's ceiling was lowered from `high` to `medium`

- Adaptive Thinking lets the model decide to skip thinking entirely under that lower ceiling

Result: the Claude Code you opened on March 4 was structurally a different product than the one you used on March 2. Same name. Same UI. Same price. Different brain.

The March Redaction Made the Damage Invisible

Between March 4 and March 12, Anthropic rolled out a third change: thinking content redaction. Users could no longer see the model's reasoning in the Claude Code UI. The rollout was gradual, and Laurenzo's logs captured every stage of it:

| Date | % of thinking content visible |
|---|---|
| March 4 | 100% |
| March 5 | 98.5% |
| March 7 | 75.3% |
| March 8 | 41.6% |
| March 10–11 | <1% |
| March 12+ | 0% |

From March 12 onward, Claude Code users could not see what (or whether) the model was thinking. They saw a placeholder indicating that the model had reasoned about the task, then the answer.
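A per-day visibility figure like the one in the table can be derived from session logs with a few lines of code. The sketch below assumes a simplified JSONL record with `type`, `timestamp`, and `text` fields, where a redacted thinking block has empty text. Those field names are assumptions for illustration and may not match the real Claude Code log format.

```python
import json
from collections import defaultdict


def visible_share_by_day(jsonl_lines):
    """Percentage of thinking blocks with visible text, keyed by date."""
    totals = defaultdict(int)
    visible = defaultdict(int)
    for line in jsonl_lines:
        line = line.strip()
        if not line:
            continue
        record = json.loads(line)
        if record.get("type") != "thinking":
            continue
        day = record["timestamp"][:10]  # "YYYY-MM-DD" prefix of an ISO timestamp
        totals[day] += 1
        if record.get("text"):  # empty or missing text counts as redacted
            visible[day] += 1
    return {day: 100 * visible[day] / totals[day] for day in sorted(totals)}


# Hypothetical records, not taken from the report.
sample_lines = [
    '{"type": "thinking", "timestamp": "2026-03-07T10:00:00Z", "text": "compare both call sites"}',
    '{"type": "thinking", "timestamp": "2026-03-07T11:00:00Z", "text": ""}',
    '{"type": "tool_use", "timestamp": "2026-03-07T11:01:00Z"}',
    '{"type": "thinking", "timestamp": "2026-03-12T09:00:00Z", "text": ""}',
]
shares = visible_share_by_day(sample_lines)
print(shares)
```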

Here is the part that makes developers furious: the 67% thinking depth drop had already happened by late February — before the redaction rolled out. The redaction did not cause the regression. It made the regression unverifiable.

Up until March 4, anyone running Claude Code could have seen exactly what Laurenzo eventually proved with statistics. After March 12, you needed JSONL session logs and a Pearson correlation analysis to even notice.

Anthropic's Response (and Why Developers Aren't Buying It)

Boris Cherny, who created Claude Code and leads the team at Anthropic, responded on both GitHub and Hacker News within hours of the issue blowing up.

His core points:

- The thinking redaction is "purely UI-level." It hides reasoning to make responses faster and cleaner; it does not change the model's actual reasoning logic, thinking budget, or underlying mechanisms.

- The Adaptive Thinking zero-reasoning behavior is a real bug, and Anthropic acknowledged the fabrication issue Laurenzo flagged. He confirmed that turns with zero emitted reasoning correlated with the hallucinated SHAs and package names.

- The August–September 2025 quality complaints had a separate root cause: a routing error that sent requests to the wrong inference servers. At peak, the error affected up to 16% of Sonnet 4 requests, and 30% of Claude Code users had at least one message misrouted during the affected window.

- The fix exists today. Setting `CLAUDE_CODE_DISABLE_ADAPTIVE_THINKING=1` forces a fixed reasoning budget instead of letting the model decide per-turn.

The community reaction was not gracious. The most-upvoted Hacker News reply called the "purely UI-level" framing dismissive: if a developer cannot see the reasoning, they cannot evaluate it, cannot debug a wrong answer, and cannot tell whether the model thought for two seconds or two hundred milliseconds. The redaction is a product change, even if the inference path is identical.

The deeper grievance: Anthropic shipped Adaptive Thinking, lowered the default effort, and rolled out reasoning redaction in roughly five weeks, without a single user-facing release note that described the combined behavioral effect. Anthropic's own stated position is that the company "never reduces model quality due to demand, time of day, or server load." Laurenzo's data does not technically contradict that, but it does prove that quality changed dramatically without disclosure.

The Fix: 6 Things You Can Do Right Now

If you use Claude Code daily, do these in order. The first two are mandatory. The rest are good hygiene that compounds.

Fix 1 — Force max effort

In any Claude Code session, type:

`/effort max`

This forces the model to use the maximum reasoning budget for every turn. It overrides the March 3 default downgrade. You will pay slightly more in tokens; you will get back the depth that disappeared in February.

Fix 2 — Disable Adaptive Thinking

Set this environment variable in your shell profile (.zshrc, .bashrc, or ~/.config/fish/config.fish):

`export CLAUDE_CODE_DISABLE_ADAPTIVE_THINKING=1`

Then restart your terminal. This stops the model from deciding to skip reasoning on a per-turn basis. Boris Cherny himself confirmed this is the recommended workaround until Anthropic ships a permanent fix.

These two changes alone restore most of the lost behavior. Do them now.
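It is easy to set the variable in one shell profile and then launch Claude Code from a terminal that never loaded it. Here is a minimal sketch of a pre-flight check you could run first. It only inspects the environment, using the variable name and the value `1` exactly as given above; whether Claude Code accepts other values is not something this article establishes.

```python
import os

ENV_VAR = "CLAUDE_CODE_DISABLE_ADAPTIVE_THINKING"


def adaptive_thinking_disabled(env=None):
    """Return True if the workaround variable is set to "1" in `env`.

    `env` defaults to the current process environment; passing a dict
    makes the check easy to test.
    """
    env = os.environ if env is None else env
    return env.get(ENV_VAR) == "1"


if __name__ == "__main__":
    if adaptive_thinking_disabled():
        print(f"{ENV_VAR}=1 is set in this shell.")
    else:
        print(f"{ENV_VAR} is not set to 1; check your shell profile.")
```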

Fix 3 — Use /compact and /clear aggressively

Long sessions cause context rot: Claude Code performance degrades as input tokens grow. Run /compact at natural task boundaries (finished a module? compact). Use /clear when switching to an unrelated task. Do this before you notice quality slipping — by the time you notice, you have already wasted a turn or three.

Fix 4 — Keep sessions short

Start a fresh conversation every 30–45 minutes of active work. Keep a CLAUDE.md in your project root with conventions, stack details, and current task state, so a new session loads with the right context immediately. The leaked Claude Code source we covered earlier confirms the agent reads CLAUDE.md on every fresh launch.

Fix 5 — Time your sessions

Laurenzo's logs and dozens of corroborating Reddit reports point to the same pattern:

- Best performance: late night / early morning US time

- Worst performance: weekday afternoons, 12–4 PM Pacific (peak US load)

More concurrent users means more load balancing, more routing variance, and more chance of hitting whatever degraded path triggered the August routing incident.

Fix 6 — Have a Plan B

If Claude Code is on your critical path, do not be single-vendor. Realistic alternatives in April 2026:

- GLM-5.1 — MIT-licensed, runs 8-hour autonomous coding sessions, no rate limits, no silent model changes

- GPT-5.4 Computer Use — strong for desktop-level agent tasks; can be wired into Claude Code via the Advisor Strategy

- OpenClaw — third-party Claude wrapper with its own reasoning controls (after Anthropic's ban, the team shipped its biggest update ever)

- DeepSeek V4 / Qwen3-Coder-Next — open-source, runnable locally, no external degradation risk

The Bigger Picture: AI Tools Are a Trust Problem

The 67% number is going to be the headline. The headline is not the story. The story is what this incident reveals about the entire category of AI coding tools in 2026.

Anthropic is not uniquely guilty. Quality complaints about Claude Code go back to September 2025, and we covered the silent-downgrade pattern when OpenAI did something similar with GPT model routing earlier this year. January 2026 saw widespread reports of "silent model downgrading" and reduced token limits across providers. March 2026 saw Anthropic ship 14 product releases and absorb 5 outages in a single month while revenue climbed 5.5x and usage grew 300% since the Claude 4 launch. Release velocity is outpacing quality assurance industry-wide.

Adaptive Thinking is the right idea, badly shipped. Letting a model decide its own reasoning budget per turn is a real efficiency win. But the shipping bar should have been: "the floor is non-zero on hard turns." Anthropic shipped it with a floor of zero. That is the bug. The 67% thinking drop is the symptom.

The deeper issue is verifiability. We have built engineering workflows around tools where we cannot independently verify output quality. When a 6,852-session statistical analysis is the minimum effort required to prove a regression, the average developer has no chance. The redaction rollout — whatever the technical justification — moved the verification bar higher, not lower.

What developers are asking Anthropic for is not magic. It is predictability:

- Advance notice before model changes that affect output quality

- Versioned model snapshots that can be pinned and rolled back

- A "do not auto-update my model" flag for production users

- Honest changelogs when behavior shifts, even if performance is theoretically improved

As one developer put it on Reddit: "We don't want magic. We want predictability."

That is the entire fight in one sentence.

---


Sources & Primary References

- Anthropic's Claude Code GitHub issue tracker — the official issue queue where the degradation was reported and Anthropic responded.

- Anthropic's status page — the canonical source for Claude model-availability and performance incidents.

- Anthropic's official Claude Code documentation — the latest documented behaviour you can diff against older versions.

- Hacker News discussion archive — independent developer commentary on the incident.